Generative AI has quietly made its way into your teams, through the back door. A sales rep summarizing a proposal with ChatGPT, an assistant asking Claude to rephrase an email, a developer pasting code into Mistral to debug it. Most data breaches today don’t stem from sophisticated attacks, but from these seemingly harmless actions. Using these tools without exposing your company requires a real cybersecurity strategy—not just three rules posted on the intranet.

Why Cybersecurity Is a Critical Issue for Small and Medium-Sized Businesses

ANSSI’s 2025 Cyber Threat Overview is clear: 48% of ransomware victims in France are small and medium-sized enterprises (SMEs), micro-enterprises, or mid-sized companies. Cost estimates vary by source, but the average incident generally runs around €75,000, rising to several hundred thousand euros when a ransomware attack halts operations for two weeks. And that’s not even counting lost customers, postponed contracts, and the loss of trust among partners.

An alarming level of cyber risk for small and medium-sized businesses

Cybersecurity for small and medium-sized businesses is no longer just about antivirus software and firewalls. Attackers are actively targeting companies with fewer than 250 employees: these companies hold valuable data (customer files, projects, contracts), are less well-equipped than large enterprises, and are more likely to pay ransoms. It’s a rational calculation for ransomware groups.

The current threats are largely predictable: ransomware, AI-assisted phishing, voice deepfakes used in CEO fraud, and now the unintentional exfiltration of data through consumer AI tools.

Here are a few figures to give you an idea of the scale:

- 5,629 reports of personal data breaches were filed with the CNIL in 2024, a 20% increase from 2023 (CNIL Annual Report, April 2025). Massive breaches—those affecting more than one million people—doubled over the same period.

- More than 99% of identity-related incidents involve stolen or compromised passwords (Microsoft Digital Defense Report). If your AI accounts reuse passwords from other services, a single leak elsewhere is enough to expose them.

- Most data breaches involve human error or compromised access (Verizon DBIR). Not some exotic technical flaw, just a click at the wrong time.

The NIS2 Directive, which is currently being transposed into French law, will extend security requirements far beyond large companies (see the box below). What was once optional will soon be subject to verification by ANSSI.

The human factor: the primary security vulnerability

A cyberattack rarely begins with a technical feat. It starts with an email sent at 5:45 p.m. on a Friday, opened in a hurry, containing a link that looks like it’s from your bank. Or with an employee who pastes a contract into ChatGPT to save ten minutes, without realizing that the contract is being sent to servers in the U.S. and could be used to train a model.

No intention to cause harm, no gross negligence. Just the desire to get the job done quickly. Except that these actions account for the majority of incidents. And the widespread adoption of free AI tools in employees’ daily lives has created a new category of data leaks: silent, without alerts, and leaving no immediate trace. This is what’s known as Shadow AI, which has become the number one invisible risk for executives in 2026: 78% of employees use AI at work, but more than half don’t tell their managers.

Serious financial and operational consequences

Imagine an accounting firm hit by ransomware in mid-March. All tax returns are encrypted, making it impossible to file them on time. The firm must choose between paying the ransom (with no guarantee) and restoring data from backups that are six months old. Either way, clients will take their business elsewhere.

Or an industrial SME whose production plans are leaked to a competitor through a poorly managed ChatGPT conversation. Ten years of R&D wiped out in two weeks. No easy lawsuit, no obvious recourse: proving the source of the leak is a nightmare.

A data breach can result in a GDPR fine (up to 4% of global revenue), calls from journalists, and the obligation to notify every affected individual. From an operational standpoint, it can take several weeks to return to normal after a serious incident. Cybersecurity and AI security are no longer two separate issues, and that is precisely why a unified approach to cybersecurity and compliance makes sense.

📋 Sidebar — NIS2: Does this apply to you?

The NIS2 Directive (Directive (EU) 2022/2555), which is being transposed into French law via the Resilience Bill, is a game-changer. NIS1 covered approximately 500 entities. NIS2 will cover between 10,000 and 15,000 entities in France, divided into two categories:

- Essential entities (EE): 250 or more employees, or €50 million in revenue, in highly critical sectors (energy, transportation, healthcare, water, digital infrastructure, banking, and public administration).

- Important entities (IE): 50 to 249 employees, or €10 million to €50 million in revenue, in other critical sectors (postal services, waste management, chemicals, agri-food, medical devices, research, digital services).

Timeline: Compliance is expected around October 17, 2026, followed by ANSSI audits. And even if your SME falls below the thresholds, your NIS2 clients will require contractual guarantees from you. You will be affected indirectly.
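As a rough first screening, the thresholds in this sidebar can be turned into a few lines of Python. This is only a sketch: the sector lists are copied from the sidebar rather than from the directive's annexes, the `Company` fields and the `nis2_category` function are hypothetical names, and the handling of the cases the sidebar does not spell out (a large company in an "other critical" sector, or a medium one in a highly critical sector) is an assumption. The second category is labelled "important" in the code, which is the directive's own term. It is not a legal determination.

```python
from dataclasses import dataclass

# Sector lists copied from the sidebar (not the full annexes of the directive).
HIGHLY_CRITICAL = {
    "energy", "transportation", "healthcare", "water",
    "digital infrastructure", "banking", "public administration",
}
OTHER_CRITICAL = {
    "postal services", "waste management", "chemicals", "agri-food",
    "medical devices", "research", "digital services",
}


@dataclass
class Company:
    sector: str
    employees: int
    revenue_eur: float


def nis2_category(company: Company) -> str:
    """Return 'essential', 'important', 'below thresholds', or 'out of scope'."""
    sector = company.sector.lower()
    if sector not in HIGHLY_CRITICAL | OTHER_CRITICAL:
        return "out of scope"
    large = company.employees >= 250 or company.revenue_eur >= 50_000_000
    medium = company.employees >= 50 or company.revenue_eur >= 10_000_000
    if large and sector in HIGHLY_CRITICAL:
        return "essential"
    # Assumption: any other listed-sector company at or above the medium
    # thresholds is treated as "important".
    if large or medium:
        return "important"
    return "below thresholds"


print(nis2_category(Company("chemicals", employees=120, revenue_eur=20_000_000)))
```

A "below thresholds" or "out of scope" result does not mean you can stop reading: as noted above, clients that are themselves covered may still pass requirements on to you by contract.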

For more information: Practical NIS2 Compliance Guide for SMEs — Technical Measures and Hardening.

How AI Enhances Cybersecurity for Small and Medium-Sized Businesses

AI isn't just a problem. When implemented properly, it significantly reduces risk by automating tasks that no human team can monitor around the clock. For an SME without a CISO, this is an opportunity to close some of the gap with larger companies.

EDR, XDR, and SOAR: AI-powered security tools

SMB EDR/XDR solutions monitor what’s happening on your endpoints, network, and cloud applications in real time. When unusual behavior occurs (such as an endpoint suddenly encrypting dozens of files), the system responds before a human has even had a chance to read the alert. It’s 24/7 monitoring without having to hire three security analysts.

SOAR platforms take it a step further. Instead of generating hundreds of alerts that no one reads, they sort, prioritize, and automatically trigger remediation actions: isolating the compromised workstation, blocking an account, and alerting the IT team. In practice, this means reducing response times from several hours to just a few minutes, with less strain on teams.
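To make the "sort, prioritize, trigger" loop concrete, here is a minimal Python sketch. Everything in it is illustrative: the `PLAYBOOK` mapping, the alert types, and the 1-to-5 severity scale are assumptions, and the actions are plain strings where a real platform would call your EDR or directory service.

```python
from dataclasses import dataclass

# Hypothetical playbook: alert type -> ordered remediation actions.
PLAYBOOK = {
    "ransomware_behavior": ["isolate_workstation", "block_account", "notify_it"],
    "credential_stuffing": ["block_account", "notify_it"],
    "phishing_click": ["notify_it"],
}


@dataclass
class Alert:
    kind: str
    host: str
    severity: int  # assumed scale: 1 (low) to 5 (critical)


def triage(alerts: list[Alert], min_severity: int = 3) -> list[tuple[Alert, list[str]]]:
    """Rank alerts by severity and attach playbook actions to those worth acting on."""
    ranked = sorted(alerts, key=lambda a: a.severity, reverse=True)
    plan = []
    for alert in ranked:
        if alert.severity < min_severity:
            continue  # below the threshold: kept out of the action plan
        # Unknown alert types fall back to a human notification.
        actions = PLAYBOOK.get(alert.kind, ["notify_it"])
        plan.append((alert, actions))
    return plan


alerts = [
    Alert("phishing_click", "pc-12", severity=2),
    Alert("ransomware_behavior", "pc-07", severity=5),
    Alert("unknown_process", "srv-01", severity=3),
]
for alert, actions in triage(alerts):
    print(alert.host, actions)
```

The point of the design is that the team only sees a short, ordered action plan instead of the raw alert stream.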

Identity Management and Continuous Authentication Using AI

More than 99% of identity-related incidents involve a stolen or compromised password. AI adds a layer of behavioral analysis on top of traditional authentication: if an administrator account logs in from Vietnam at 3 a.m. to download the entire CRM database, the system automatically blocks it.

The same logic applies to the use of external AI tools. If an employee who never uses ChatGPT suddenly sends a thousand queries in ten minutes on topics related to your R&D, the system detects it. This is called continuous authentication: we don’t just verify at login; we verify continuously. Combined with robust identity and access management (IAM/PAM), it’s a real game-changer.
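The "thousand queries in ten minutes" case can be approximated with a simple sliding-window counter, sketched below in Python. This is a deliberately simplified stand-in for real behavioral analysis: commercial tools learn a per-user baseline, whereas this hypothetical `BurstDetector` class uses a fixed threshold that you would have to choose yourself.

```python
from collections import defaultdict, deque


class BurstDetector:
    """Flag a user who sends more than `max_requests` within `window_seconds`."""

    def __init__(self, max_requests: int = 100, window_seconds: float = 600.0):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self._events = defaultdict(deque)  # user -> timestamps still in the window

    def record(self, user: str, timestamp: float) -> bool:
        """Record one request; return True if the user is now over the limit."""
        events = self._events[user]
        events.append(timestamp)
        # Drop timestamps that have fallen out of the sliding window.
        while events and timestamp - events[0] > self.window_seconds:
            events.popleft()
        return len(events) > self.max_requests


detector = BurstDetector(max_requests=100, window_seconds=600)
flagged = any(detector.record("alice", t * 0.5) for t in range(1000))
print("alice flagged:", flagged)
```

Each request is checked as it arrives, which is the "verify continuously" idea in miniature: the decision is re-evaluated on every action, not only at login.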

Proactive Detection and Resilience Through Artificial Intelligence

Not all vulnerabilities are created equal. In a fleet of 200 workstations, an automated scan might identify 500 vulnerabilities. AI allows you to classify them by actual criticality: which ones are currently being actively exploited, which ones affect a system exposed to the internet, and which ones are purely theoretical. You fix the 15 that really matter and manage the others over the long term.
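The ranking logic described here can be sketched in a few lines of Python. The `Vulnerability` fields and the scoring rule (active exploitation first, internet exposure second, raw severity last) are assumptions chosen to mirror the paragraph above, not the method of any particular product.

```python
from dataclasses import dataclass


@dataclass
class Vulnerability:
    identifier: str
    cvss: float               # base severity score, 0.0 to 10.0
    actively_exploited: bool  # known to be exploited in the wild
    internet_exposed: bool    # affected system is reachable from the internet


def priority(v: Vulnerability) -> tuple[int, float]:
    """Rank by exploitation and exposure first, raw severity second."""
    tier = 2 * v.actively_exploited + 1 * v.internet_exposed
    return (tier, v.cvss)


def shortlist(vulns: list[Vulnerability], limit: int = 15) -> list[Vulnerability]:
    """Return the `limit` vulnerabilities to fix first."""
    return sorted(vulns, key=priority, reverse=True)[:limit]


scan = [
    Vulnerability("VULN-A", 9.8, actively_exploited=False, internet_exposed=False),
    Vulnerability("VULN-B", 6.5, actively_exploited=True, internet_exposed=True),
    Vulnerability("VULN-C", 7.0, actively_exploited=False, internet_exposed=True),
    Vulnerability("VULN-D", 5.0, actively_exploited=True, internet_exposed=False),
]
for v in shortlist(scan, limit=2):
    print(v.identifier)
```

Note that the highest raw score (VULN-A) does not make the shortlist here: a theoretical flaw on an unexposed machine ranks below a moderate one that attackers are already using.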

When it comes to resilience, the principle is simple: we can never prevent every incident, so we prepare for recovery. That means quarterly backup tests, annual ransomware crisis simulations, and an up-to-date disaster recovery plan. SMEs that follow this process can resume operations within a few hours after an incident. Those that don’t spend several weeks, sometimes several months, doing so.

Security Risks and Challenges Related to AI in Small and Medium-Sized Enterprises

Pasting a client contract into ChatGPT to get a quick summary means sending it to OpenAI: its servers, its databases, and potentially its moderation teams. Consumer AI tools are effective and inexpensive, but their business model relies in part on the data you provide. This isn’t a minor detail—it’s the deal.

ChatGPT, Claude, Mistral: What Risks Do They Pose to Your Sensitive Data?

ChatGPT, Claude, Mistral, Grok, Perplexity, Gemini: these are all cloud services operated by third-party companies, primarily American (with Mistral being the French exception). Whatever you type into the prompt—your questions, pasted documents, and the context you provide—is processed on their servers. Depending on the version used and the terms of service, this data may be used to train the models, accessed by their teams, or retained for several months. Enterprise versions generally offer better contractual guarantees, but they also cost significantly more.

Real-life example: A remote sales representative drafts a report with Claude and forgets to remove a Social Security number at the bottom of the copied draft. That is personal data transmitted to Anthropic. There was no malicious intent or gross negligence. Even so, the incident has to be handled as a personal data breach: the CNIL must be notified within 72 hours, and the person whose number was exposed must be informed as well.

📋 Sidebar — AI Act: What the European AI Regulation Changes

Regulation (EU) 2024/1689, known as the AI Act, entered into force on August 1, 2024, with a phased implementation. Three key dates to keep in mind:

- Effective February 2, 2025: prohibited practices (social scoring, behavioral manipulation) and a requirement for AI literacy (Article 4). In practice: anyone who uses an AI tool in a business setting must have received training. This applies to ChatGPT as well as Mistral.

- Effective August 2, 2025: Requirements for providers of general-purpose AI (GPAI) models—transparency, documentation, and compliance with copyright laws.

- Effective August 2, 2026: requirements for high-risk AI systems (HR, credit scoring, biometrics, education, critical infrastructure) and transparency requirements for chatbots and synthetic content.

Penalties: up to €35 million or 7% of global revenue for the most serious violations. Important note: If you use an AI tool to pre-screen resumes or assess a customer’s creditworthiness, you are deploying a high-risk system, and specific requirements will apply starting in August 2026.

Costs, false positives, and compliance: the limitations of AI solutions

AI-powered security solutions aren't magic, and it's best to know that before you buy one. Here are a few things to keep in mind:

- Black boxes and explainability. You won’t always know why the model classified one email as suspicious and another as legitimate. This can be an issue when facing a CNIL audit or a client who asks for an explanation.

- False positives. A poorly configured solution can generate dozens of alerts for a single real threat. After a few weeks, the team automatically ignores them all. That’s what happened at Target in 2013, to cite a well-known example.

- Actual cost. A basic stack (EDR + MFA + backup) for an SME costs tens of thousands of euros. A full XDR/SOAR deployment with a managed SOC can quickly reach an initial cost of €200,000 to €300,000, plus 15% to 20% annually. This should be calculated as the total cost of ownership over three years, not as a one-time purchase.

- Talent shortage. Simply purchasing the tool isn't enough; you need someone who can interpret the results. Such professionals are rare and expensive.

- A mountain of regulations. GDPR, NIS2, DORA, AI Act: regulations are piling up and evolving rapidly. A solution deployed without a legal framework could leave you non-compliant with one regulation while protecting you under another.

These limitations do not mean that we should give up on AI in security. They mean that we need to approach the subject with a realistic budget and serious support, not with the promises found in a sales brochure.
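To put the "total cost of ownership over three years" point into numbers, here is a small Python sketch using the ranges quoted above. It assumes the recurring fee is charged every year, from the first year, as a fixed share of the initial cost; your vendor's pricing may work differently, and the function name is purely illustrative.

```python
def three_year_tco(initial_eur: float, annual_rate: float, years: int = 3) -> float:
    """Initial cost plus a recurring fee charged each year as a share of that cost."""
    return initial_eur + initial_eur * annual_rate * years


low = three_year_tco(200_000, 0.15)   # lower bound of the ranges quoted above
high = three_year_tco(300_000, 0.20)  # upper bound of the ranges quoted above
print(f"Three-year TCO: {low:,.0f} to {high:,.0f} EUR")
```

Under those assumptions, a full XDR/SOAR deployment comes to roughly €290,000 to €480,000 over three years, which is a more honest figure to put in front of management than the initial quote alone.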

Train employees to reduce human-related risks associated with AI

How much confidential data is sent to AI prompts every week without anyone realizing it? It’s hard to measure precisely, but the honest answer is: a lot. Awareness starts with simple messages, repeated until they become second nature: “What you type into ChatGPT leaves the company, so no customer numbers, no contracts, no personal data.”

In terms of format, simulated phishing campaigns are more effective than boring e-learning modules. A fake email arrives; if you click on it, you immediately receive feedback on what should have tipped you off. It’s tangible, it’s memorable, and it changes behavior. Note: Since February 2025, the AI Act has required AI training for anyone using these tools in a business setting. Awareness has shifted from “recommended best practice” to “legal requirement.”

Training alone is never enough. You also need technical tools to catch mistakes (DLP, filtering, monitoring) and a team or SOC capable of responding quickly when someone does exactly what we were trying to prevent.

Best Practices for Securing AI in Small and Medium-Sized Businesses

It is possible to use ChatGPT, Claude, or Mistral without exposing your data. It requires a few clear guidelines, some technical tools, and a trained team. Here are the steps in order.

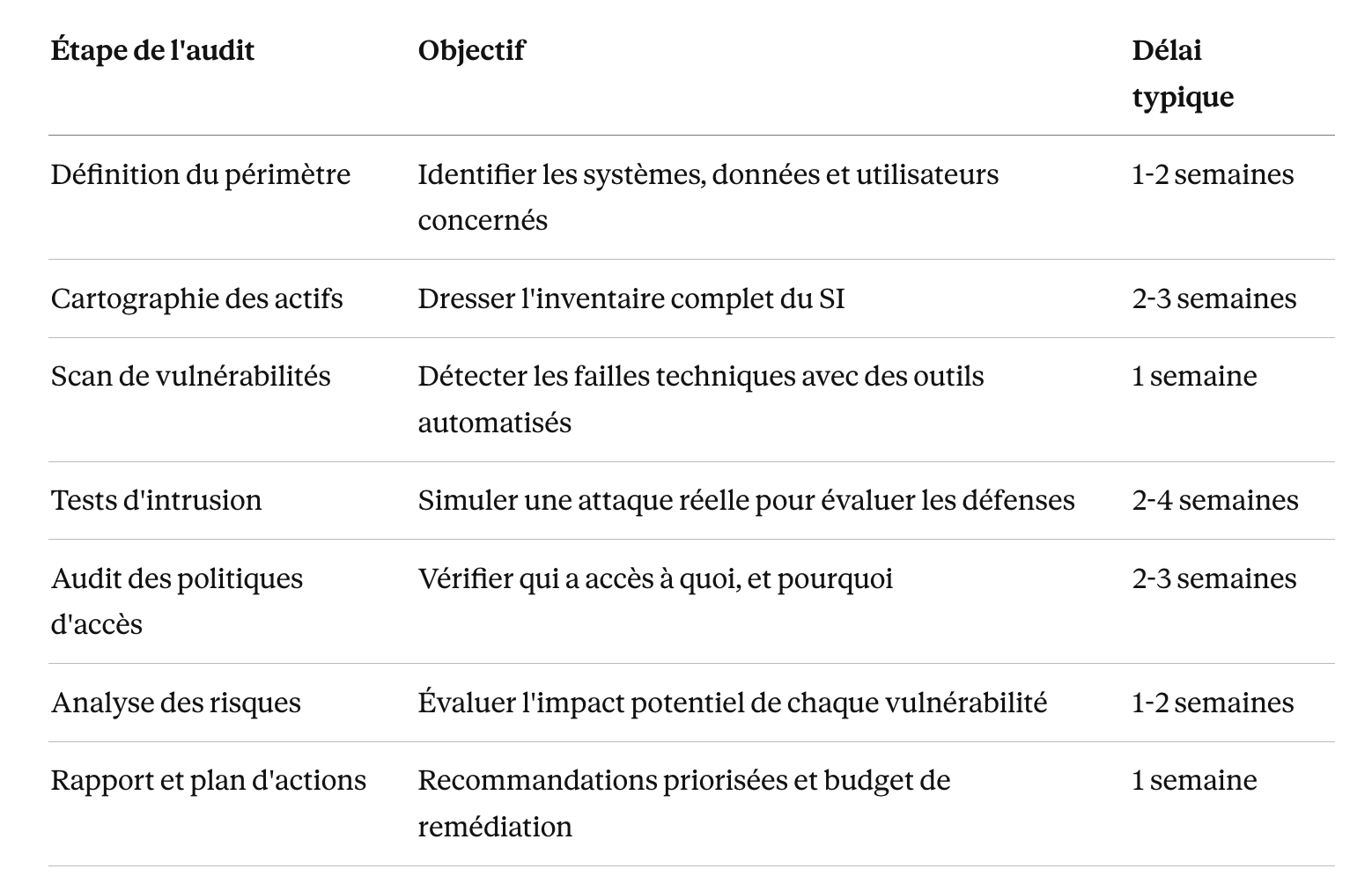

Security Audit and Hardening: The Essential Foundations

It all starts with an IT security audit for small and medium-sized businesses. Not to produce a 200-page report, but to answer simple questions: who has access to what, where is sensitive data stored, which software is up to date, and which accounts have MFA enabled. The audit often reveals surprising things: a network share that’s been open since 2019, a former employee’s account that’s still active, a backup server that’s never been tested.

Next comes hardening the systems: enhanced configurations, removal of unnecessary software, and enabled firewalls. And above all, MFA must be implemented for all privileged accounts. This last point is non-negotiable: properly deployed MFA blocks nearly all simple identity attacks.
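The account-related audit questions lend themselves to a simple automated check. The Python sketch below assumes you can export your accounts into a list with the fields shown; the `Account` structure, the finding names, and the 90-day staleness threshold are all hypothetical choices, not a standard.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Account:
    username: str
    privileged: bool
    mfa_enabled: bool
    last_login: date
    employee_active: bool  # False once the person has left the company


def audit_accounts(
    accounts: list[Account], today: date, stale_after_days: int = 90
) -> dict[str, list[str]]:
    """Group usernames by the kind of finding an audit would raise."""
    cutoff = today - timedelta(days=stale_after_days)
    findings = {"privileged_without_mfa": [], "former_employee_active": [], "stale": []}
    for acc in accounts:
        if acc.privileged and not acc.mfa_enabled:
            findings["privileged_without_mfa"].append(acc.username)
        if not acc.employee_active:
            findings["former_employee_active"].append(acc.username)
        elif acc.last_login < cutoff:
            findings["stale"].append(acc.username)
    return findings


accounts = [
    Account("admin1", True, False, date(2026, 1, 10), True),
    Account("jdoe", False, True, date(2019, 6, 1), False),
    Account("svc-backup", False, True, date(2025, 6, 1), True),
    Account("admin2", True, True, date(2026, 1, 12), True),
]
print(audit_accounts(accounts, today=date(2026, 1, 15)))
```

Run against a real export, a check like this tends to surface exactly the surprises mentioned above, starting with privileged accounts that still lack MFA.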

An annual audit—or one conducted after any major change to the IT system—ensures that new AI integrations remain compliant with NIS2, GDPR, and the AI Act. Deploying Claude Enterprise in an SME that hasn’t conducted an audit in three years is like installing a sophisticated alarm system on a front door with no lock.

Governance, access, and backups for sustainable security

Three key questions shape the rest of the discussion: who has access to what, how is data protected, and how do we recover it when things go wrong? The first question is addressed by the IAM/PAM combination: IAM manages day-to-day access (who can open which files), while PAM locks down privileged accounts, which are the real targets of attackers.

Next, a few basic practices:

- Define what can be shared with an external AI. Provide a clear list of prohibited data types (identifiers, customer data, contracts, strategic source code), data that is permitted under certain conditions, and approved tools based on the role.

- Encrypt data in transit and at rest. If encrypted data is accidentally sent in a prompt, it remains unreadable. This doesn’t exempt you from the no-sharing rule, but it limits the damage.

- Back up using the 3-2-1 rule: three copies, two different media types, and one off-site copy. And above all, test the restore process every quarter. Many small and medium-sized businesses only discover during a disaster that their backups won’t restore.
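The 3-2-1 rule is easy to verify mechanically once your backup inventory is written down. A minimal Python sketch, assuming the inventory counts the production copy among the three and that each entry records its media type and whether it is off-site (the `BackupCopy` structure is hypothetical):

```python
from dataclasses import dataclass


@dataclass
class BackupCopy:
    location: str
    media: str     # e.g. "disk", "nas", "tape", "cloud"
    offsite: bool


def satisfies_3_2_1(copies: list[BackupCopy]) -> bool:
    """True with at least 3 copies, on at least 2 media types, at least 1 off-site."""
    media_types = {c.media for c in copies}
    has_offsite = any(c.offsite for c in copies)
    return len(copies) >= 3 and len(media_types) >= 2 and has_offsite


inventory = [
    BackupCopy("file server", "disk", offsite=False),
    BackupCopy("office NAS", "nas", offsite=False),
    BackupCopy("cloud provider", "cloud", offsite=True),
]
print(satisfies_3_2_1(inventory))
```

Passing this check says nothing about whether a restore actually works, which is why the quarterly restore test remains the part that matters most.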

Before granting a team access to Claude, Mistral, or Grok, take the time to classify your data: what’s public, what’s internal, what’s confidential, and what’s secret. Then implement a DLP tool that blocks sharing of the latter two categories. It’s less glamorous than defensive AI, but it prevents the majority of incidents.
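To illustrate the principle, here is a minimal Python sketch of a prompt filter built on those four levels. The detection rules are hypothetical and far cruder than what a real DLP tool does: a French IBAN pattern, a private-key header, and two keyword markers. Text that matches no rule defaults to public, which is an assumption you would want to revisit for your own data.

```python
import re
from enum import IntEnum


class Level(IntEnum):
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    SECRET = 3


# Hypothetical detection rules; real DLP tools use far richer detectors.
RULES = [
    (Level.SECRET, re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----")),
    (Level.CONFIDENTIAL, re.compile(r"\bFR\d{2}(?:\s?[0-9A-Z]{4}){5}\s?[0-9A-Z]{3}\b")),
    (Level.CONFIDENTIAL, re.compile(r"\bconfidential\b", re.IGNORECASE)),
    (Level.INTERNAL, re.compile(r"\binternal use only\b", re.IGNORECASE)),
]


def classify(text: str) -> Level:
    """Return the highest level whose rule matches; unmatched text defaults to PUBLIC."""
    return max(
        (level for level, pattern in RULES if pattern.search(text)),
        default=Level.PUBLIC,
    )


def allow_prompt(text: str) -> bool:
    """Block anything classified CONFIDENTIAL or SECRET before it leaves the company."""
    return classify(text) < Level.CONFIDENTIAL


prompt = "Please draft a reminder for invoice 42, IBAN FR76 3000 6000 0112 3456 7890 189"
print(classify(prompt).name, allow_prompt(prompt))
```

Pattern matching only catches the obvious cases, so a filter like this complements the sharing rules and training rather than replacing them.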

Sovereignty and European Alternatives: Taking Back Control of Your Data

The choice of tool is just as important as how you use it. Today, there are several options that allow you to use generative AI without your data ever crossing the Atlantic:

- Mistral AI (Le Chat Enterprise): French models, European hosting, and the option for on-premises deployment for the most sensitive use cases.

- Enterprise versions of Claude and ChatGPT: contractual commitment not to use data for training, access management, logging, and enhanced GDPR compliance. These are definitely the better choice over the consumer versions if you’re handling business data.

- AI gateways (Dust, LightOn, or open-source solutions like LiteLLM): a single entry point to multiple models, with upstream DLP rules and log monitoring.

- On-premises deployment or sovereign cloud: for truly sensitive data (strategic R&D, healthcare data, intellectual property), running an open-source model (Mistral, Llama) on OVHcloud or Scaleway eliminates any data transfer to third parties.

The right tool isn't necessarily the most well-known one; it's the one that aligns with your data sensitivity level and regulatory requirements.

Support and training: the keys to a successful cybersecurity strategy

Even the best tools are useless if no one knows how to use them properly. Comprehensive support includes an initial audit, tailored deployment, ongoing monitoring by a SOC, and regular training sessions. Not just an annual session that no one remembers two months later.

Ultimately, the goal is for every employee to understand why they shouldn’t paste a customer email into Mistral—not just to know that they shouldn’t do it. Someone who understands the reasoning behind a rule is more reliable than someone who simply follows it. And it’s often that employee who prevents the incident that no one saw coming.

Frequently Asked Questions

How can small and medium-sized businesses use ChatGPT and Claude safely?

Three steps to take this week, without waiting for a budget or a project: make a clear list of what should never be fed into an external AI system (customer numbers, login credentials, trade secrets, personal data), train your teams using real-world examples from your business, and switch to the Enterprise versions of the tools you use.

Next, implement a DLP solution that automatically blocks obvious data leaks. If the budget allows, a centralized AI gateway or an on-premises deployment for the most sensitive use cases rounds out the security arsenal. A SOC providing continuous monitoring then alerts you to anything the technical tools might miss.

What budget should SMEs set aside for AI security?

The figures vary depending on your company’s size and other factors. As a general guide:

- SMEs with 20 employees: €20,000 to €30,000 to get off to a solid start (audit, awareness training, comprehensive MFA, lightweight EDR, 3-2-1 backup).

- SMEs with 80 employees and sensitive data: €80,000 to €200,000 for a comprehensive solution (XDR, managed SOC, DLP, AI gateway), plus 15% to 20% in annual fees.

- Advanced XDR/SOAR deployment with an in-house SOC: over €300,000, typically reserved for mid-sized companies or highly regulated industries.

Compare this to the cost of an incident (€75,000 on average, and several hundred thousand euros for a ransomware attack that brings operations to a halt) and potential regulatory penalties (up to 4% of global revenue under the GDPR, and up to 7% under the AI Act). To set your budget without overspending, the simplest approach is to start with an IT security audit using a methodology and checklist tailored to small and medium-sized businesses. It will reveal where your true priorities lie.

Should ChatGPT be banned, or should it be integrated in a secure manner?

Banning it doesn't work. Your teams will use the tools on the sly, from their personal phones or accounts created with their personal email addresses. This is what's known as "Shadow AI," and it's unmanageable.

Guided integration is more effective: approved tools, clearly listed authorized data, mandatory training, and technical monitoring. Successful SMEs say, “Yes, here’s how,” rather than “No, that’s not allowed.” It’s also worth noting that AI training has been a legal requirement since February 2025, so the debate is largely settled.

Is my SME affected by NIS2?

You are likely in scope if both of the following conditions are met: you operate in one of the 18 targeted sectors (energy, healthcare, transportation, water, digital infrastructure, postal services, waste management, chemicals, agri-food, medical devices, digital services, etc.), and you have at least 50 employees or €10 million in revenue.

And even if you fall below the thresholds, be careful: your NIS2 clients will require contractual guarantees regarding your security level. Discovering this requirement after losing a bid, or worse, during a client audit, is a bad way to find out. To prepare with confidence, our practical NIS2 compliance guide details the technical measures to implement (hardening, EDR, auditing) and a five-step action plan.

Main sources: CNIL 2024 Annual Report (published in April 2025), ANSSI/CERT-FR 2025 Cyber Threat Overview, Microsoft Digital Defense Report, Verizon Data Breach Investigations Report, Regulation (EU) 2024/1689 (AI Act), Directive (EU) 2022/2555 (NIS2).
