ChatGPT, Microsoft Copilot, Claude, Gemini: in just a few years, generative AI has gone from a novelty to a professional tool adopted by most large companies. For an SME looking to take the plunge, the key question is no longer “should we get started?” but “how can we train our teams to derive real value from it without exposing our data or wasting our licenses?” This guide summarizes what an SME executive or CIO needs to know before launching a generative AI training program in 2026.

1. In practical terms, what does generative AI look like in 2026?

Generative AI refers to a family of tools capable of generating content (text, images, code, audio, video) based on a simple instruction in natural language. The underlying engine, a large language model (LLM), has been trained on massive amounts of text to learn how to predict the most likely next word in a sequence.
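To make "predicting the most likely next word" concrete, here is a deliberately tiny sketch. It is not how a real LLM works internally (those rely on neural networks trained on vastly more text); it only counts which word most often follows another in a made-up three-sentence corpus. The corpus and the function names are our own illustrative assumptions.

```python
from collections import Counter, defaultdict

# Tiny made-up corpus, for illustration only.
CORPUS = (
    "the meeting starts at nine . "
    "the meeting ends at noon . "
    "the report is due at noon ."
)

def build_bigram_counts(text):
    """Count, for each word, which words follow it and how often."""
    counts = defaultdict(Counter)
    words = text.split()
    for current_word, next_word in zip(words, words[1:]):
        counts[current_word][next_word] += 1
    return counts

def most_likely_next(counts, word):
    """Return the most frequent follower of `word`, or None if the word was never seen."""
    followers = counts.get(word)
    if not followers:
        return None
    return followers.most_common(1)[0][0]

counts = build_bigram_counts(CORPUS)
print(most_likely_next(counts, "the"))  # meeting
print(most_likely_next(counts, "at"))   # noon
```

The toy model answers "noon" after "at" simply because that pairing is the most frequent in its data, not because it knows when anything ends. The same logic, at a much larger scale, is why a plausible answer is not necessarily a true one.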

In practical terms, you type “Write a 10-line summary of this meeting report,” and the tool generates a coherent text in just a few seconds. It is this conversational interaction that changed everything between 2022 and 2026: you no longer need to know how to code to use powerful AI.

Three things to keep in mind before moving on:

- Generative AI does not “understand” in the human sense of the word. It produces what statistically resembles a correct answer, which explains why it can “hallucinate”—confidently asserting things that are false.

- Models are improving rapidly, but they are not all-knowing. They have a training cutoff date (often a few months before their public release) and are not aware of current events or your internal data, unless this information is explicitly provided to them.

- The ecosystem has taken shape. In addition to consumer-facing conversational AI assistants, we now see assistants integrated into business software (Copilot in Microsoft 365, Gemini in Google Workspace), specialized models, and “AI agents” capable of performing multiple tasks autonomously.

2. Why training your teams in generative AI has become essential

In 2026, giving employees access to generative AI without training them is a bit like handing them a car without a driver’s license: technically possible, but the risk-to-value ratio is disastrous. Three observations from the field explain why generative AI training has become the critical link in any corporate adoption strategy.

First observation: without training, these tools are not used properly. Feedback on Microsoft Copilot and ChatGPT Enterprise deployments shows that a license without accompanying training remains largely underutilized. Employees use them as an enhanced search engine, whereas the real productivity gains come from advanced use cases: summarizing long documents, generating tables, and structured writing, which require a minimum of methodology (known as “prompt engineering”).

Second observation: without training, risks skyrocket. An untrained employee might copy and paste customer data into the free version of ChatGPT, blindly trust the AI’s “hallucinated” responses, or be unaware that the European AI Act now imposes certain transparency requirements. Effective training in generative AI addresses these risks head-on and instills the right habits from the start.

Third observation: without training, shadow AI takes hold. If the company does not provide guidance on its use, employees will organize themselves, using their personal accounts, phones, or unauthorized tools. Paradoxically, AI training is the best way to manage shadow AI rather than fighting it head-on (we’ll return to this in Section 6).

For these three reasons, most of the small and medium-sized businesses we work with now start with a group training session on generative AI, rather than rolling out a standalone tool. The investment is modest (expect to pay €1,200 to €2,000 for a one-day session with a group of 10), and the return on investment is measurable from the very first month.

3. The Real Benefits for an SME: What Feedback from the Field Says

Marketing figures suggest productivity gains of 40% or more. The reality for the small and medium-sized businesses we work with is more nuanced, but remains very positive. Here is what we are actually seeing:

- 30 to 60 minutes saved per day per employee on tasks suited to AI: drafting emails, summarizing documents, searching internal information, translating, and producing first drafts of documents.

- Significant reduction in setup time for non-recurring tasks—such as preparing a request for proposals, drafting a contract template, or structuring a client brief.

- Better written work from employees for whom writing is not their primary job (sales representatives, operations staff, technicians).

- More accessible data analysis: an AI assistant lets non-specialists work with an Excel file, cross-reference data, and produce an initial analysis.

The business functions most affected to date among our SME clients are, in order: legal services (law firms, legal departments), accounting, marketing/communications, sales, and support functions (HR, finance, procurement). Manual and field-based technical roles have, for the time being, been less affected.

4. ChatGPT, Copilot, Claude, Gemini: Which tool should you use to train your teams?

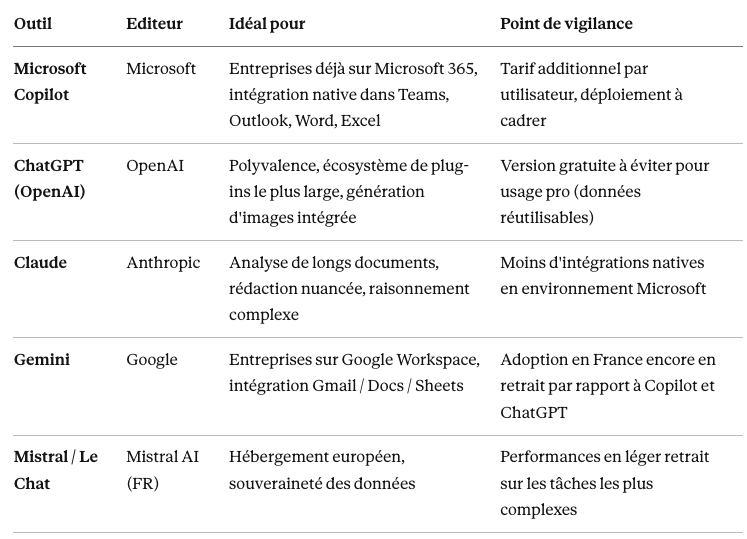

This is the question that comes up in all our scoping sessions. The short answer: it depends on your current tech stack and your privacy requirements. Here is a brief comparison for 2026.

In practice, for most of the small and medium-sized businesses we work with, which already use Microsoft 365, Copilot is the most natural starting point: it integrates into tools they already know, and Microsoft’s commercial contract offers stronger privacy protections than the consumer versions of other tools. That doesn’t preclude mixing and matching: Claude (particularly its Cowork version, designed for teamwork) for document analysis and nuanced writing; ChatGPT for creativity; and Mistral for cases where data sovereignty is non-negotiable.

5. The 5 risks that AI training must absolutely address

Generative AI offers real benefits, but it also introduces new risks that no business leader can afford to ignore.

Risk #1 — Data leaks. The classic scenario: an employee copies and pastes a confidential client contract into the free version of ChatGPT to summarize it. The data can then be reused to train the model. With consumer versions, assume by default that anything you send is potentially exploitable.

Risk #2 — Hallucinations. Generative AI sometimes produces false information with complete confidence: fabricated quotes, nonexistent laws, and incorrect figures. This is particularly dangerous in fields where the reliability of information is critical—law, medicine, accounting, and engineering.

Risk #3 — Shadow AI. When a company doesn’t establish any guidelines, employees still use AI—through their personal accounts, their phones, or unauthorized tools. According to several studies, this is now the default situation in most small and medium-sized businesses. It’s better to set clear guidelines than to let things slide.

Risk No. 4 — Industry-specific compliance. For regulated professions (lawyers, certified public accountants, healthcare professionals), the use of generative AI is subject to a specific ethical framework. A law firm that shares information covered by attorney-client privilege with a consumer-facing tool would be committing a serious breach of professional conduct.

Risk No. 5 — Dependency and loss of skills. In the medium term, unmonitored use can lead to an erosion of basic skills—the ability to write without assistance, to structure one’s reasoning, and to memorize. This is more of a management issue than a technological one, but it is worth raising.

6. Doesn't training teams risk exacerbating shadow AI?

This is the most common objection we encounter in coaching, and it deserves a straightforward answer: no, as long as you don’t train in a vacuum.

Let’s start by assessing the actual situation. Shadow AI—the use of AI tools not authorized by the company, accessed via a personal account or outside the IT infrastructure—is not a future risk. It is already a reality in the majority of small and medium-sized businesses in 2026. When employees are surveyed anonymously, we find that a significant proportion of them are already using ChatGPT, Claude, or Gemini on their phones, personal accounts, or in private browser tabs. The real question, therefore, is not “will training create shadow AI?” but “do we regulate practices that already exist, or do we continue to turn a blind eye?” We’ve dedicated an article to the phenomenon of shadow AI and its risks for businesses for those who wish to explore this specific topic further.

A well-designed training program actually works on three fronts to reduce shadow AI rather than amplify it.

First, it assumes that an official tool has already been deployed. The main reason an employee turns to shadow AI is the lack of an authorized tool. When the company provides Copilot M365, ChatGPT Team, or another contractually regulated tool, the employee no longer has any reason to switch to their personal account; the official tool is more convenient, better integrated into the work environment, and already has their work data accessible.

Second, it explains why certain practices are dangerous. Employees who engage in shadow AI aren’t acting maliciously; they’re simply misinformed. When you show them, in concrete terms, that a client contract copied and pasted into a consumer-facing version can be reused to train the model, their behavior changes. Without training, the prohibition remains abstract; with training, the risk is understood and internalized.

Finally, it establishes a clear and sustainable framework. Each training session systematically concludes with a code of conduct: what is permitted, what is not permitted, in which tool, and for what type of data. This framework stands the test of time because it is understood, not merely imposed.
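A code of conduct of this kind (which tool, for which type of data) can be written down as a simple lookup table. The sketch below is only an illustration: the tool names, the three data categories, and the rules themselves are hypothetical placeholders, not a recommended policy.

```python
from enum import Enum

class DataClass(Enum):
    PUBLIC = "public"
    INTERNAL = "internal"
    CONFIDENTIAL = "confidential"  # e.g. client contracts, HR or patient data

# Hypothetical policy: which data categories each tool may receive.
# Tool names and rules are placeholders to adapt to your own context.
USAGE_POLICY = {
    "official_assistant": {DataClass.PUBLIC, DataClass.INTERNAL},
    "consumer_chatbot": {DataClass.PUBLIC},
}

def is_allowed(tool, data_class):
    """Return True only if the policy explicitly allows this combination."""
    return data_class in USAGE_POLICY.get(tool, set())

print(is_allowed("official_assistant", DataClass.INTERNAL))    # True
print(is_allowed("consumer_chatbot", DataClass.CONFIDENTIAL))  # False
print(is_allowed("unknown_tool", DataClass.PUBLIC))            # False
```

The design choice worth noting is "deny by default": a tool that is not listed is never allowed, which mirrors the idea that anything outside the official framework counts as shadow AI.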

The real risk is providing training without first deploying an official tool. If you explain to your employees how to leverage generative AI but don’t provide them with structured professional access, you’re effectively turning casual users into enthusiastic users… who will continue using it on their personal accounts. That’s why the sequence matters: official tool first, usage guidelines next, and training last. Training alone is never a good idea—but the tool alone, without training, leads to unused licenses and zero return on investment. The two go hand in hand.

7. GDPR, AI Act, professional confidentiality: what training should cover

The European legal framework has become clearer. Three sets of rules shape the use of AI in businesses in 2026:

- The GDPR continues to apply whenever personal data is processed. Feeding customer, HR, or patient data into generative AI constitutes processing that must be documented and requires a legal basis.

- The European AI Act, which has been phased in since 2024, imposes obligations that vary depending on the level of risk associated with the use. The vast majority of SME applications fall under the "limited risk" (requiring transparency) or "minimal risk" categories, but certain cases—particularly in HR—may fall into categories with stricter obligations.

- Sector-specific obligations (attorney-client privilege, accounting confidentiality, medical confidentiality, and the code of ethics for architects) apply in addition to these and take precedence.

In practical terms, for an SME, this translates into three essential steps: informing employees of the rules of use, selecting tools whose service agreements provide sufficient safeguards (commitment not to reuse data, clear hosting terms, security), and documenting high-risk data processing activities.

8. How much does a generative AI training course cost for businesses?

This is one of the most frequently asked questions during the scoping phase. The budget for a generative AI training program depends on four factors: the format (a half-day introductory session or a full-day workshop), the number of participants, the level of industry-specific customization, and the delivery method (in-person or remote).

For reference, here are the price ranges observed in the French market in 2026:

- Generative AI Awareness Workshop (half-day, up to 10 participants): €600–€900. Ideal format for an initial group orientation without an in-depth workshop.

- Comprehensive Generative AI Training (1 day, up to 10 participants): €1,200–€2,000. The go-to format for helping teams become truly self-sufficient.

- Custom Generative AI Training (industry-specific, in-house): €2,000–€3,500 per day. For consulting firms and SMEs with highly specific use cases.

In addition to these training costs, there are tool costs if the company is not yet equipped: €20 to €35 per user per month for a Microsoft 365 Copilot or ChatGPT Team license, in addition to the existing office software subscription.

For an SME with 50 employees that trains and equips half of its workforce, the total budget for the first year typically amounts to €15,000 to €20,000 for software licenses, plus €5,000 to €15,000 for training and project management. Given the observed productivity gains (30 to 60 minutes per day per trained employee), the return on investment is generally positive as early as the first year, provided that the training is delivered and the skills acquired are maintained over time.
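The reasoning above can be checked with a back-of-the-envelope calculation. The cost ranges, the 25 trained employees, and the 30 to 60 minutes saved per day come from this article; the number of working days and the hourly cost of an employee are our own assumptions, to be replaced with your figures. Treat it as a rough sketch, since time saved does not convert automatically into money.

```python
# Figures taken from the article.
TRAINED_EMPLOYEES = 25                 # half of a 50-person SME
LICENSE_COST_EUR = (15_000, 20_000)    # first-year range (low, high)
TRAINING_COST_EUR = (5_000, 15_000)    # training and project management (low, high)
MINUTES_SAVED_PER_DAY = (30, 60)       # per trained employee (low, high)

# ASSUMPTIONS, not from the article: adjust to your own situation.
WORKING_DAYS_PER_YEAR = 200
HOURLY_COST_EUR = 30                   # loaded hourly cost of an employee

def yearly_value(minutes_per_day):
    """Monetary value of the time saved by all trained employees over a year."""
    hours = TRAINED_EMPLOYEES * minutes_per_day / 60 * WORKING_DAYS_PER_YEAR
    return hours * HOURLY_COST_EUR

def total_cost(index):
    """First-year cost: index 0 is the low estimate, index 1 the high estimate."""
    return LICENSE_COST_EUR[index] + TRAINING_COST_EUR[index]

# Conservative case: lowest time savings against the highest costs.
conservative_net = yearly_value(MINUTES_SAVED_PER_DAY[0]) - total_cost(1)
# Optimistic case: highest time savings against the lowest costs.
optimistic_net = yearly_value(MINUTES_SAVED_PER_DAY[1]) - total_cost(0)

print(f"Conservative net gain: {conservative_net:,.0f} EUR")  # 40,000 EUR
print(f"Optimistic net gain:   {optimistic_net:,.0f} EUR")    # 130,000 EUR
```

Under these assumptions, even the conservative case stays positive in the first year. The result is sensitive to the assumed hourly cost and to whether the time saved is actually achieved every working day.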

9. Sample Curriculum for a Generative AI Training Course for SMEs

A good corporate training program on generative AI isn’t just a lecture on AI. It’s a hands-on workshop where employees learn by doing, using their own tools and working with their own documents. Here is the one-day program we recommend, which has been validated through several sessions delivered to small and medium-sized businesses in 2025–2026.

Morning — Understanding and Choosing. The first half-day covers the basics: what generative AI is, how it works in practice, how to distinguish between ChatGPT, Copilot, Claude, and Gemini, and, most importantly, how to choose the right tool for each use case. This is when fears are put to rest and employees understand what they’re dealing with. A module dedicated to the legal framework (GDPR, AI Act, professional confidentiality, intellectual property) wraps up the morning and instills the right security habits before any hands-on workshop.

Afternoon — Hands-on Practice. The second half-day is entirely devoted to workshops focused on the participants’ specific roles. We work on prompt engineering—the art of formulating a prompt to obtain a reliable and actionable result—and then move on to real-world use cases: drafting emails and reports, literature reviews, meeting preparation, translation, and basic Excel analysis. Each participant leaves with a library of personalized prompts and a 30-day action plan to solidify these new habits.
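To give an idea of what "formulating a prompt" means in practice, here is a minimal sketch of a reusable prompt template. The Role / Context / Task / Output format / Constraints structure is one common convention among several, not a standard, and the function name and example wording are our own illustrative assumptions.

```python
def build_prompt(role, context, task, output_format, constraints=()):
    """Assemble a structured prompt from clearly labeled parts."""
    lines = [
        f"Role: {role}",
        f"Context: {context}",
        f"Task: {task}",
        f"Output format: {output_format}",
    ]
    if constraints:
        lines.append("Constraints:")
        lines.extend(f"- {item}" for item in constraints)
    return "\n".join(lines)

prompt = build_prompt(
    role="You are an assistant helping a sales representative.",
    context="The minutes of a client meeting are pasted below this prompt.",
    task="Write a 10-line summary of this meeting report.",
    output_format="Plain text, 10 lines maximum, decisions first, then open questions.",
    constraints=[
        "Do not invent figures or names.",
        "Flag anything unclear instead of guessing.",
    ],
)
print(prompt)
```

A template like this is the kind of entry that can go into a personal prompt library: the structure stays the same, and only the context and task change from one use to the next.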

A well-designed generative AI training program therefore covers four complementary dimensions:

- The basics for demystifying the tools and alleviating concerns

- Prompt engineering for reliable and reproducible results

- Real-world business use cases, based on participants' actual documents

- The security framework and internal usage policies

This is precisely the scope of our Generative AI Training for Businesses, offered by IT Systèmes for employees with no technical prerequisites, in groups of up to 10 people, in person or remotely. The training can be tailored to your industry (accounting, legal, architecture, manufacturing, or local government), with use cases built around your actual documents.

10. Where to Start: A 5-Step Roadmap

Here is the roadmap we recommend to the small and medium-sized businesses we work with to ensure the successful deployment of generative AI and related training.

Step 1 — Define the scope (2 to 4 weeks). Identify 3 to 5 priority use cases by consulting directly with the teams. Choose the primary tool based on your existing tech stack. Define the minimum usage rules (what is allowed, what is not allowed).

Step 2 — Launch a pilot (4 to 8 weeks). Roll out the initiative in a volunteer department (often marketing or operations). Measure actual gains. Compile a library of prompts that work.

Step 3 — Secure and scale (4 to 6 weeks). Formalize the usage policy. Verify compliance with GDPR and industry-specific regulations. Define the scope of authorized data. Implement the appropriate governance tools (such as Microsoft Purview for M365 environments).

Step 4 — Provide extensive (ongoing) training. This is the step that distinguishes successful deployments from those that stall. One day of generative AI training per employee remains the best investment.

Step 5 — Evolve (continuously). Expand to new use cases. Explore AI agents (capable of automatically chaining together tasks). Adapt governance and training modules as use cases mature.
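For planning purposes, the durations of the three time-boxed steps can be added up. This small sketch assumes the steps run one after the other with no overlap, which is our simplification; steps 4 and 5 are ongoing and therefore left out.

```python
# (min_weeks, max_weeks) for the time-boxed steps, as given in the roadmap.
STEPS = {
    "Step 1: define the scope": (2, 4),
    "Step 2: launch a pilot": (4, 8),
    "Step 3: secure and scale": (4, 6),
}

# ASSUMPTION: steps are strictly sequential, with no overlap.
total_min = sum(low for low, _ in STEPS.values())
total_max = sum(high for _, high in STEPS.values())

print(f"Steps 1 to 3 take {total_min} to {total_max} weeks in total.")  # 10 to 18 weeks
```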

Generative AI is no longer a five-year plan; it’s a 2026 issue. SMEs that start preparing now will gain a real operational edge over those that wait—not because the technology is magical, but because learning best practices as a group takes time, and the skills acquired are long-lasting.

Three tips to wrap things up:

- Don't wait too long, but don't rush it either. It's better to have a pilot project done right over three months than a botched large-scale rollout in six weeks.

- Invest more in training than in licenses. That’s where the ROI comes into play.

- Get on board before shadow AI takes hold. If your employees don’t have an official tool, they’re already using an unofficial one.

Would you like to discuss this with our experts? We offer an initial 30-minute consultation to help you define your needs and guide you toward the right solution. Contact us or check out our Generative AI Training for Businesses.
